Over the past year, there have been some pretty big improvements to Puppet. I am still running PE 2016.4.2 and the current version is 2017.3.2, so there are a lot of changes in there. Most of the changes are backwards-compatible, so an upgrade from last November’s version is not quite as bad as it sounds, and I definitely want to start using the new features and improvements. The big one for me is Hiera version 5 (new in Puppet 4.9 / PE 2017.1). It is backwards-compatible, so you can start using it right now, but it does require some changes to take advantage of the new features. I have to upgrade the server, upgrade the agents, and then start implementing the new features! Why do I care about Hiera 5 in particular?

Hiera 3 was great, but you could only use one hiera setup on a server, regardless of how many environments were deployed on that server. This could cause problems when you wanted to change the hiera config and test it. You could not test it in a feature branch; it HAD to be promoted to affect the entire server. If you had multiple masters, you could change the config on just one, but that was about as flexible as hiera 3 would let you be. If it worked, awesome. If it broke, you could break a whole lot that needed to be undone before you could try again.

Hiera 5 introduces independent hierarchy configurations per environment and even per module! If you want to try out a new backend like hiera-eyaml, you can now create a new feature branch eyaml-test, update the configuration in that branch, push it, and ONLY nodes that use that environment will receive the new configuration. This is a huge help in testing changes to hiera without blowing up all your nodes.
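As a rough sketch of what that eyaml-test branch might carry, here is an environment-level hiera.yaml in the version 5 format, placed at the root of the controlrepo. The hierarchy levels, data paths, and key locations are assumptions for illustration, not my actual setup, and the encrypted level assumes the hiera-eyaml gem is already installed on the master.

```yaml
# <controlrepo>/hiera.yaml on the eyaml-test branch (illustrative only)
---
version: 5
defaults:                # used by any level that omits these keys
  datadir: data          # relative to this hiera.yaml
  data_hash: yaml_data   # built-in YAML backend
hierarchy:
  - name: "Per-node secrets (encrypted)"
    lookup_key: eyaml_lookup_key   # overrides the default backend for this level
    path: "nodes/%{trusted.certname}.eyaml"
    options:
      # key paths are assumptions; point these at wherever your keys live
      pkcs7_private_key: /etc/puppetlabs/puppet/eyaml/private_key.pkcs7.pem
      pkcs7_public_key: /etc/puppetlabs/puppet/eyaml/public_key.pkcs7.pem
  - name: "Per-node data"
    path: "nodes/%{trusted.certname}.yaml"
  - name: "Common data"
    path: "common.yaml"
```

Only nodes assigned to the eyaml-test environment would read this file; every other environment keeps using its own configuration.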

The per-module hierarchy also means that module authors can include defaults that use hiera, rather than the params.pp pattern. This makes it easier for module users to override settings. There are also improvements in the interface for those who want to create their own backends. And, best of all, hiera 5 means the name hiera is here to stay. No more confusion between “legacy” hiera and modern lookup; it’s all called hiera 5 now.
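For module authors, a minimal sketch of that pattern might look like the following: a hiera.yaml in the module root plus a data file holding the defaults. The module name mymodule and its package_name and service_enable keys are hypothetical; the point is only that defaults live in data instead of a params.pp class.

```yaml
# mymodule/hiera.yaml (module-level configuration, version 5 format)
---
version: 5
defaults:
  datadir: data          # relative to the module root
  data_hash: yaml_data
hierarchy:
  - name: "OS family defaults"
    path: "os/%{facts.os.family}.yaml"
  - name: "Common defaults"
    path: "common.yaml"

# mymodule/data/common.yaml (hypothetical keys, all in the module's own namespace)
---
mymodule::package_name: 'mymodule'
mymodule::service_enable: true
```

A module user who wants a different value sets the same key, say mymodule::service_enable, in their environment's data, which is consulted before the module's own data.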

It does mean there are some deprecations to keep in mind, but they won’t actually go away until at least Puppet 6. You can use hiera 5 now and take some time to replace the deprecated bits. This does mean we can use our existing hiera 3 setup and worry about migrating it to hiera 5 later, too, which we will take advantage of.

I always prefer to upgrade to the latest version. If for some reason you’re upgrading to Puppet 4.9, be aware that Puppet 4.10 fixed PUP-7554, which caused failures when a hiera 3 format hiera.yaml was found at the base of a controlrepo or module. I kept a hiera.yaml in the root of my controlrepo for bootstrapping purposes for a long while, and if you do, you could hit that bug. Best to just move to the latest if you can.

I think most people will like Hiera 5, but there are a ton of other features (listed at the end) and even if nothing appeals specifically, it is good to stay up to date so you don’t get stuck with a really nasty upgrade process when you find a feature you really need. Please, don’t let yourself get a full year behind on updates like I did. Sometimes it’s really difficult to get out of that situation!

Puppet Enterprise Server Upgrade

I use Puppet Enterprise at both work and home these days, so I will go through the PE upgrade experience. The Puppet OpenSource install and upgrade instructions are on the same page of the documentation, so it seems pretty easy but your mileage may vary, of course.

First, take a look at your installation and make sure it’s in a known state – preferably a known good state all the way around, but at least a known one. If you have outstanding issues on the master, you need to resolve them. If some agents are failing to check in, you may want to take the time to fix them, or you could just keep track of the failures. After the upgrade, you don’t want to see an increase in failures. Once everything looks good, take a snapshot of your master(s) and a full application/OS backup if possible. If you have a distributed setup, perform this on all nodes as close to the same time as possible.

Second, download the latest version of PE (KB#0001) onto your master. Expand the tarball, cd into the directory, and run sudo ./puppet-enterprise-installer. You can provide a .pe.conf file with the -c option, or answer a few interactive questions to get started:

=============================================================

Puppet Enterprise Installer

=============================================================

2017-11-08 20:38:07,432 Running command: cp /opt/puppetlabs/server/pe_build /opt/puppetlabs/server/pe_build.bak

2017-11-08 20:38:07,480 Running command: cp /opt/puppetlabs/puppet/VERSION /opt/puppetlabs/server/puppet-agent-version.bak

## We've detected an existing Puppet Enterprise 2016.5.2 install.

Would you like to proceed with text-mode upgrade/repair? [Yn]y

## We've found a pe.conf file at /etc/puppetlabs/enterprise/conf.d/pe.conf.

Proceed with upgrade/repair of 2016.5.2 using the pe.conf at /etc/puppetlabs/enterprise/conf.d/pe.conf? [Yn]y

The install takes a bit of time (30 minutes on my lab install). Once the upgrade is done, you’ll be directed to run puppet agent -t (with sudo of course). If you have additional compile masters or ActiveMQ hubs and spokes, also run the commands in steps 4 and 5.

You should now be able to log into the Console and see the status of your environment. You will hopefully find Intentional Changes on most of your nodes and no failures. If both are encountered in a run, Intentional Changes “wins” on the Console, so let every node run at least twice to see if it moves back to Green or Red before continuing.

If you do encounter failures, you will have to analyze each issue to see if it’s related to the upgrade and something you can fix, or if it’s time to roll back. If you do roll back, make sure you roll back ALL the PE components, including the PuppetDB, so you don’t leave cruft somewhere. I experienced one issue in the lab described in PUP-7878, resolved by a reboot of the master after the upgrade.

If everything is good, then it is time to proceed to upgrading the Agents.

Agent Upgrades

The Puppet docs provide instructions for upgrading Agents in a variety of methods. I prefer to use the module puppetlabs/puppet_agent, as I’ve discussed before (OpenSource, PE Linux and PE Windows clients). My experience is with using my profile module and hiera data in the controlrepo, while Puppet’s instructions use the Console Classifier. It really does not matter how you do this, but I did find an issue with the Puppet docs (DOCUMENT-763): after classifying, you must set the parameter puppet_agent::package_version or no upgrade occurs for agents already running Puppet 4 or 5. Set it to 5.3.3 (obtained by running puppet --version on the master, which received the latest agent during the upgrade). Here’s how to do that in hiera:

puppet_agent::package_version: '5.3.3'

The next two agent runs will show changes. I ran my tests directly using ssh on a Linux host and it looked like this:

# First run upgrades Puppet 4 to 5

[rnelson0@build03 controlrepo:pe201732]$ sudo puppet agent -t --environment pe201732

Info: Using configured environment 'pe201732'

Info: Retrieving pluginfacts

Info: Retrieving plugin

Info: Loading facts

Info: Caching catalog for build03.nelson.va

Info: Applying configuration version '1510241461'

Notice: /Stage[main]/Puppet_agent::Osfamily::Redhat/Yumrepo[pc_repo]/baseurl: baseurl changed 'https://yum.puppetlabs.com/el/$releasever/PC1/x86_64' to 'https://puppet.nelson.va:8140/packages/2017.3.2/el-7-x86_64'

Notice: /Stage[main]/Puppet_agent::Osfamily::Redhat/Yumrepo[pc_repo]/sslcacert: defined 'sslcacert' as '/etc/puppetlabs/puppet/ssl/certs/ca.pem'

Notice: /Stage[main]/Puppet_agent::Osfamily::Redhat/Yumrepo[pc_repo]/sslclientcert: defined 'sslclientcert' as '/etc/puppetlabs/puppet/ssl/certs/build03.nelson.va.pem'

Notice: /Stage[main]/Puppet_agent::Osfamily::Redhat/Yumrepo[pc_repo]/sslclientkey: defined 'sslclientkey' as '/etc/puppetlabs/puppet/ssl/private_keys/build03.nelson.va.pem'

Notice: /Stage[main]/Puppet_agent::Install/Package[puppet-agent]/ensure: ensure changed '1.9.2-1.el7' to '5.3.3-1.el7'

Notice: /Stage[main]/Puppet_enterprise::Mcollective::Server::Logs/File[/var/log/puppetlabs/mcollective]/mode: mode changed '0750' to '0755'

Notice: Applied catalog in 71.86 seconds

# Second run updates some PE components

[rnelson0@build03 controlrepo:pe201732]$ sudo puppet agent -t --environment pe201732

Info: Using configured environment 'pe201732'

Info: Retrieving pluginfacts

Info: Retrieving plugin

Info: Loading facts

Info: Caching catalog for build03.nelson.va

Info: Applying configuration version '1510241554'

Notice: /Stage[main]/Puppet_enterprise::Pxp_agent/File[/etc/puppetlabs/pxp-agent/pxp-agent.conf]/content:

--- /etc/puppetlabs/pxp-agent/pxp-agent.conf 2017-11-08 21:16:56.713834368 +0000

+++ /tmp/puppet-file20171109-20790-1l6y5bg 2017-11-09 15:32:45.917909748 +0000

@@ -1 +1 @@

-{"broker-ws-uris":["wss://puppet.nelson.va:8142/pcp2/"],"pcp-version":"2","ssl-key":"/etc/puppetlabs/puppet/ssl/private_keys/build03.nelson.va.pem","ssl-cert":"/etc/puppetlabs/puppet/ssl/certs/build03.nelson.va.pem","ssl-ca-cert":"/etc/puppetlabs/puppet/ssl/certs/ca.pem","loglevel":"info"}

\ No newline at end of file

+{"broker-ws-uris":["wss://puppet.nelson.va:8142/pcp2/"],"pcp-version":"2","master-uris":["https://puppet.nelson.va:8140"],"ssl-key":"/etc/puppetlabs/puppet/ssl/private_keys/build03.nelson.va.pem","ssl-cert":"/etc/puppetlabs/puppet/ssl/certs/build03.nelson.va.pem","ssl-ca-cert":"/etc/puppetlabs/puppet/ssl/certs/ca.pem","loglevel":"info"}

\ No newline at end of file

Info: Computing checksum on file /etc/puppetlabs/pxp-agent/pxp-agent.conf

Info: /Stage[main]/Puppet_enterprise::Pxp_agent/File[/etc/puppetlabs/pxp-agent/pxp-agent.conf]: Filebucketed /etc/puppetlabs/pxp-agent/pxp-agent.conf to puppet with sum cad3d2db7a7a912a1734b7e8afa23037

Notice: /Stage[main]/Puppet_enterprise::Pxp_agent/File[/etc/puppetlabs/pxp-agent/pxp-agent.conf]/content: content changed '{md5}cad3d2db7a7a912a1734b7e8afa23037' to '{md5}a19b53e1586a748ba488ee4dcd7afc3c'

Info: /Stage[main]/Puppet_enterprise::Pxp_agent/File[/etc/puppetlabs/pxp-agent/pxp-agent.conf]: Scheduling refresh of Service[pxp-agent]

Notice: /Stage[main]/Puppet_enterprise::Pxp_agent::Service/Service[pxp-agent]: Triggered 'refresh' from 1 event

Notice: /Stage[main]/Puppet_enterprise::Mcollective::Server/File[/etc/puppetlabs/mcollective/server.cfg]/content: [diff redacted]

Info: Computing checksum on file /etc/puppetlabs/mcollective/server.cfg

Info: /Stage[main]/Puppet_enterprise::Mcollective::Server/File[/etc/puppetlabs/mcollective/server.cfg]: Filebucketed /etc/puppetlabs/mcollective/server.cfg to puppet with sum 7a8d59f271273738a51b4cf05ee6b33a

Notice: /Stage[main]/Puppet_enterprise::Mcollective::Server/File[/etc/puppetlabs/mcollective/server.cfg]/content: changed [redacted] to [redacted]

Info: /Stage[main]/Puppet_enterprise::Mcollective::Server/File[/etc/puppetlabs/mcollective/server.cfg]: Scheduling refresh of Service[mcollective]

Notice: /Stage[main]/Puppet_enterprise::Mcollective::Service/Service[mcollective]: Triggered 'refresh' from 1 event

Notice: Applied catalog in 6.02 seconds

I assume the PE component updates are based on facts only present with Puppet 5, facts that would not be present during the first run while the agent is still Puppet 4. Subsequent runs are stable.

I do not have a Windows agent to test with in my lab. I assume it looks similar, but I cannot verify that. Be sure to test at least one Windows agent before releasing this change across your entire Windows fleet.

New Features

I have skipped from 2016.4 to 2017.3, which means I have missed out on new features in four major versions: 2016.5, 2017.1, 2017.2, and 2017.3. Here are some of the big features from the release notes:

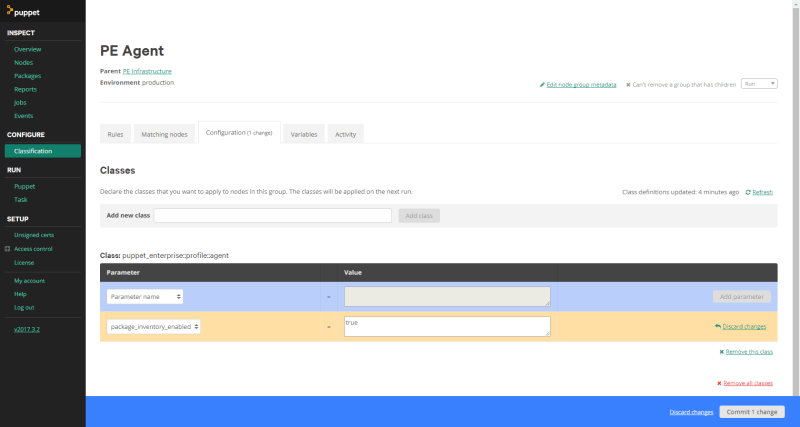

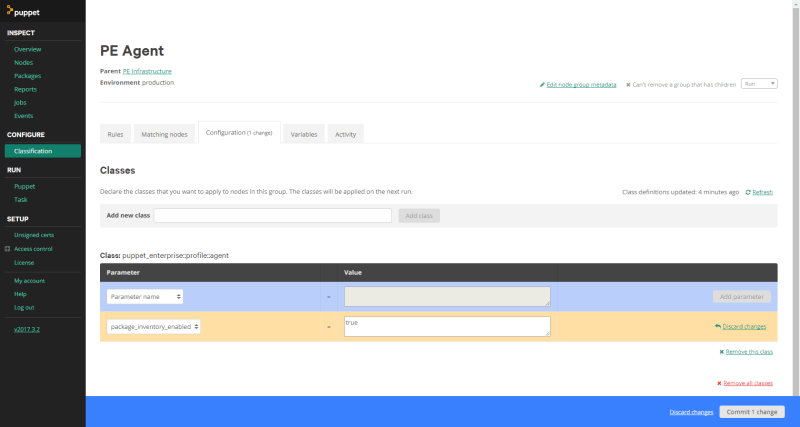

I mentioned Hiera 5 already, which I’ll discuss further in another post. I also want to immediately enable the Package Inventory. As described, I can update the Classification of the PE Agent node group to include puppet_enterprise::profile::agent with package_inventory_enabled set to true, and then commit the change.

While it takes effect immediately, your agents need two runs to show up: the first changes the setting so package data is collected and the second actually collects the list. Once that happens with at least one node, you’ll start seeing data populate on the Inspect > Packages page.
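Since I do most of my overrides in hiera rather than the Classifier, the same setting could probably be expressed as data instead. This is an untested sketch that relies on automatic class parameter lookup. It assumes puppet_enterprise::profile::agent is declared without that parameter set in the Console, because a value set in the Classifier would take precedence over hiera.

```yaml
# data/common.yaml in the controlrepo (file location is an assumption)
---
puppet_enterprise::profile::agent::package_inventory_enabled: true
```

Either way, the two-run behavior described above should be the same, since the agent only starts collecting package data once the setting has been applied.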

I do not have a need for High Availability myself, but that seems really cool. In the past, it’s been quite the pain in the behind to automate yourself. I have not used Orchestrator in anger before, and I do Hiera overrides in my control repo, almost ignoring the Console Classifier otherwise, so I probably will not be exploring the Orchestrator and Classifier improvements in much depth. However, I’m really excited about Tasks. That’s something I hope to explore during the winter break, perhaps by upgrading bash across all my systems!

Summary

Today we looked at why we want to upgrade to the latest Puppet and upgraded a Puppet Enterprise monolithic master and some Linux agents. It’s not that hard! We also staked out features that we want to investigate and turned on the Package Inventory. There are a lot more changes than I listed, along with tons of fixed bugs and smaller improvements, so I recommend reviewing the release notes for each version to see what interests you.

I hope to be able to look into Hiera 5 and Tasks soon, so look for new blog posts on those! Let me know if there’s anything else you’d like to see discussed in the comments or on twitter. Thanks!