Over the past few days, I’ve had to set up an NFS export for use with vSphere. I found myself frustrated with the vSphere docs because they only seem to cover the vSphere side of the house. When discussing the export that vSphere will use, the docs simply say, “Ask your vendor.” If you’re enabling NFS on your SAN and the vendor has a one-click setup or a document listing the settings to enable, great, but if you’re setting up on a bare-metal server or a VM running Linux like I was, you don’t have a vendor to ask.

Since I didn’t have a guide, I took a look at what happens when you enable NFS on a Synology. I don’t know if this is optimal, but this works for many people with the defaults. You can replicate this in Control Panel -> Shared Folders -> Highlight and Edit -> NFS Permissions. Add a new rule, add a hostname or IP entry, and hit OK. Here’s what the defaults look like:

Let’s take a look at what happened in the busybox shell. SSH to your Synology as root with the admin password. Take a look at the permissions on the mount path (e.g. /volume1/rnelson0) and the contents of /etc/exports.

(There is no trailing newline at the end of the file; the ‘ds214>’ is part of the prompt, not the exports contents.)
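If you want to repeat that check yourself, something like the following should work on the Synology or on any Linux NFS server. It is only a sketch: `show_export` is a hypothetical helper name, not a Synology or nfs-utils command, and it relies on nothing beyond `ls` and `cat`.

```shell
# show_export DIR [EXPORTS_FILE]
# Print the permissions on an exported directory, then the exports file.
# show_export is a hypothetical helper used for illustration only.
show_export() {
    export_dir=$1
    exports_file=${2:-/etc/exports}

    # -d lists the directory itself rather than its contents;
    # a mode of 0777 shows up as drwxrwxrwx.
    ls -ld "$export_dir" || return 1

    # Each line has the form: <path> <client>(<comma-separated options>)
    cat "$exports_file"
}
```

On the Synology from this post that would be `show_export /volume1/rnelson0`.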

A working mode for the directory is ‘0777’, and there’s a long string of NFS options. They are described in detail in the exports(5) man page. Here’s a high-level summary of each:

- rw: Enable writes to the export (default is read-only).

- async: Allow the NFS server to reply to write requests before the data has actually been committed to disk. This usually improves performance, but data can be lost or corrupted if the server crashes, so it is very much a preference.

- no_wdelay: Do not delay committing a write when the server suspects (how? I don’t know) that another related write is coming. According to exports(5), this option has no effect when async is also set, so it only matters here if you remove async. It can have performance impacts in that case, so check whether the default wdelay is more appropriate.

- no_root_squash: Do not map requests from uid/gid 0 (typically root) to the anonymous uid/gid.

- insecure_locks: Do not require authentication of locking requests.

- sec=sys: There are a number of security modes; sys, the default, means no cryptographic security is used.

- anonuid/anongid: The uid/gid for the anonymous user. On my Synology these are 1025/100 and match the guest account. Red Hat-family distros have traditionally used 99/99 for the nobody account, while Debian and Ubuntu use 65534/65534, so check what yours uses. vSphere will be writing as root, so this likely has no actual effect.

For my own server, I changed the netmask and the anon(u|g)id values, since the only likely non-vSphere client is a Linux box with a nobody user. Those should be the only values you need to change; async and no_wdelay are up to your preference.
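Putting those options together, an /etc/exports entry for a plain Linux server could be built along these lines. Treat it as a sketch rather than a recommendation: `vsphere_export_line` is a hypothetical helper, and the path, network, and uid/gid are example values to replace with your own.

```shell
# vsphere_export_line EXPORT_PATH CLIENTS [ANONUID] [ANONGID]
# Print one /etc/exports line using the option set copied from the Synology.
# Hypothetical helper; the uid/gid default to 99/99 as used in this post.
vsphere_export_line() {
    export_path=$1
    clients=$2
    anonuid=${3:-99}
    anongid=${4:-99}
    opts="rw,async,no_wdelay,no_root_squash,insecure_locks,sec=sys"

    # No space between the client spec and "(": with a space, the options
    # would apply to any host rather than only to the named client.
    printf '%s %s(%s,anonuid=%s,anongid=%s)\n' \
        "$export_path" "$clients" "$opts" "$anonuid" "$anongid"
}
```

As root, `vsphere_export_line /nfs/vsphere 10.0.0.0/24 >> /etc/exports` followed by `exportfs -ra` would append the entry and re-export everything.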

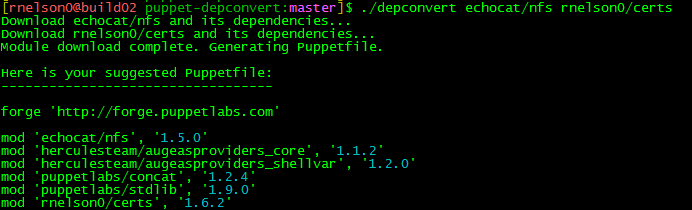

If you use Puppet, you can automate the setup of your NFS server and exports. I use the echocat/nfs module from the Forge (don’t forget the dependencies!). Assuming you already have a /nfs mount of sufficient size in place, the following manifest will create a share with the correct permissions and export flags for use with vSphere:

    node server {
      include ::nfs::server

      file { '/nfs/vsphere':
        ensure => directory,
        mode   => '0777',
      }

      ::nfs::server::export { '/nfs/vsphere':
        ensure  => 'mounted',
        nfstag  => 'vsphere',
        clients => '10.0.0.0/24(rw,async,no_wdelay,no_root_squash,insecure_locks,sec=sys,anonuid=99,anongid=99)',
      }
    }
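After a Puppet run it is worth confirming that the flags actually landed. The check below is a rough sketch: `export_has_flags` is a hypothetical helper, it assumes the entry ends up in /etc/exports (which is where I would expect the module to write it), and it only understands lines with a single client.

```shell
# export_has_flags EXPORTS_FILE EXPORT_PATH FLAG...
# Succeed only if the line for EXPORT_PATH lists every FLAG.
# Hypothetical helper; assumes one "client(options)" group per line.
export_has_flags() {
    exports_file=$1
    export_path=$2
    shift 2

    # Take the first line whose first field is exactly EXPORT_PATH.
    line=$(awk -v p="$export_path" '$1 == p { print; exit }' "$exports_file")
    [ -n "$line" ] || { echo "no export for $export_path" >&2; return 1; }

    # Keep only the text inside the parentheses, padded with commas so that
    # each flag can be matched as a whole word (rw, not just "r").
    opts=",$(printf '%s' "$line" | sed 's/^[^(]*(//; s/).*$//'),"

    for flag in "$@"; do
        case "$opts" in
            *",$flag,"*) ;;
            *) echo "missing $flag" >&2; return 1 ;;
        esac
    done
}
```

For the manifest above, `export_has_flags /etc/exports /nfs/vsphere rw no_root_squash sec=sys` should return success. Running `exportfs -v` on the server shows what is actually being exported at the moment.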

To add your new datastore to vSphere, launch the vSphere Web Client. Go to the Datastores page, select the Datacenter you want to add the export to, and click on the Related Objects tab. Select Datastores; the first icon adds a new datastore. Select NFS and the NFS version (the version choice is new to vSphere 6), give your datastore a name, and provide the path of the export (/nfs/vsphere) and the hostname/IP of the server (nfs.example.com). Continue through the wizard, checking each host that will need the new datastore.

Click Finish and you have a datastore that uses NFS! You may need to tweak the export flags for your environment, but this should be a good starting point. If you are aware of a better set of export flags, please let me know in the comments. Thanks!
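As a postscript for anyone who would rather script the mount than click through the wizard: the same NFS v3 datastore can be added from an ESXi shell with `esxcli storage nfs add`. The sketch below assumes you have shell or SSH access to each host. `nfs_add_cmd` is a hypothetical helper that only prints the command so you can review it first, and the datastore name `vsphere-nfs` is made up.

```shell
# nfs_add_cmd SERVER SHARE DATASTORE_NAME
# Print (do not run) the esxcli command that mounts an NFS v3 export
# as a datastore. Hypothetical helper for illustration.
nfs_add_cmd() {
    printf 'esxcli storage nfs add --host=%s --share=%s --volume-name=%s\n' \
        "$1" "$2" "$3"
}

# Example values from this post; the datastore name is an assumption.
nfs_add_cmd nfs.example.com /nfs/vsphere vsphere-nfs
```

Run the printed command on each host that needs the datastore, then confirm with `esxcli storage nfs list`. NFS 4.1 mounts live in a separate `esxcli storage nfs41` namespace.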